LLMs can't read the room

Some leadership responsibilities can never be outsourced to AI, no matter how compelling the idea seems.

I wrote this piece for LinkedIn initially, but wanted to share it here as a continuation of my musings on AI and the workforce. The rate of change is incredible, and more and more people are living and breathing AI, so finally we can move from armchair philosophy to real lived experience. What’s yours?

This week I spent time with one of the most technically ambitious investment firms I have come across. They are building a fully algorithmic VC process: probabilistic models, a decision engine that scores founder experience, education, network evolution, market fit. The work is impressive.

I asked what the real bottleneck was. I was imagining it would be a data pipeline, or some technical capability that wasn’t advanced enough, but I was wrong. It was “getting enough face time with founders.”

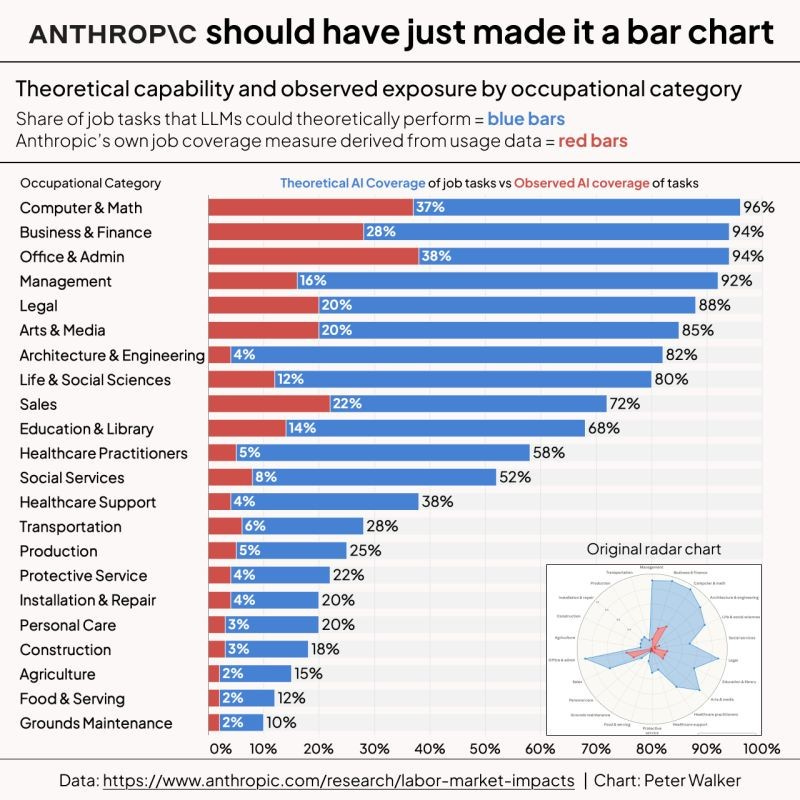

Anthropic published research this month tracking AI’s actual economic footprint. The headline numbers are pretty striking: AI can theoretically handle 94% of tasks for computer programmers. For every 10 percentage point increase in a role’s AI coverage, projected employment growth drops 0.6 points through 2034.

The more useful number: 52% of AI interactions are augmentation, not automation. Success rates on complex tasks sit at 66%.

It’s getting clearer and clearer that AI can absorb a significant portion of human judgment, faster and at scale, without ego or anchoring bias. For many decisions, that beats the human alternative. That said, AI carries its own biases from its training data and its trainers, so in essence we’re trading one set of human problems for another. Different is not none.

At Daylight, I ran a mission-driven fintech with an explicit ambition to build the most diverse team in financial services. In practice, that created one of the hardest people challenges I have navigated.

Our team members had multiple overlapping identities. Each had a deeply held conviction about what the mission meant, and no two convictions were identical. Two distinct orientations emerged that rarely spoke the same language: one more business-oriented, the other more justice-oriented.

This created a translation problem that neither side could quite solve, between the fiduciary, numbers-first framing I naturally lead with and the more emotive, mission-led lens that many of our best people brought to everything. Both perspectives were necessary, but it was hard to know when to flex one over the other in any given situation. Do I talk to an investor about mission, or CAC? What about the marketing team? Get it wrong and I might as well have been speaking a different language.

Today I find LLMs are useful translation tools for this kind of situation. We work with clients dealing with burnout, fractured teams, or communications that keep landing wrong. These problems keep appearing in slightly different guises. AI helps me get my message across in a way that works for the recipient, not just in the way my brain constructs it.

But that only works because the judgment is still mine. Knowing what needs to be said. Knowing who I am saying it to. Knowing the moment. The model helps me execute on it. It cannot replace the act of putting myself in someone else’s shoes.

The same logic applies to that VC firm.

Their model can assess founder quality across hundreds of signals. But when you are deciding whether to back a specific person, to tie your reputation and capital to this human being for the next five years, something else is happening. You can have all the data in the world, but does this person light up the room? Does their ambition translate into a real sales environment? Are they charismatic enough to sell the vision? For some things you just need to read the room.

This comes up constantly in the capability model work I do on AI-enabled automation and enhancement. Work is often a social matter and, as a dear friend always says, “people buy from people.”

Recruiting. Sales. Stakeholder trust. Culture formation. AI can and should help with all of these. But at the moment of decision, you are still a social animal making a social bet.

The most algorithmic investment fund I know still needs to sit across from a founder and decide if they want a relationship.

You still need to sell. You still need to hire. Build your AI capability model around that.

As you use AI more and more, I’m curious: where are you finding the boundary between AI and human judgment?

Rob Curtis is Managing Director of Crucible Hill Partners, working with founders, investors and leadership teams at inflection points on the operational challenges that matter. If this raised a question about your own capability model, get in touch.